The Surprising Effectiveness of Noise Pretraining for Implicit Neural Representations

Kushal Vyas Alper Kayabasi Daniel Kim Vishwanath Saragadam Ashok Veeraraghavan Guha Balakrishnan

CVPR, 2026

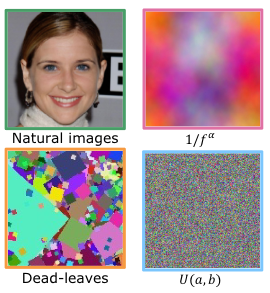

We show that INRs pretrained on structured noise (e.g., noise with 1/f spectral structure) and on unstructured noise (random uniform, Gaussian) are excellent signal fitters. Pretraining on unstructured noise yields a powerful initialization akin to hash-encoding-type models, which encourage information maximization, while pretraining on structured noise yields an implicit prior equivalent to that of data-driven models such as INRs pretrained on ImageNet.
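
For illustration only, the two noise families named above could be generated roughly as follows. This is a minimal NumPy sketch, not the paper's pipeline: the function names, the 64×64 image size, the `alpha` exponent, and the [0, 1] normalization are all assumptions made for the example.

```python
import numpy as np


def structured_noise_image(size=64, alpha=1.0, seed=0):
    """Hypothetical helper: noise whose amplitude spectrum falls off as 1/f**alpha."""
    rng = np.random.default_rng(seed)
    # Start from white Gaussian noise and move to the frequency domain.
    white = rng.standard_normal((size, size))
    spectrum = np.fft.fft2(white)
    # Radial frequency magnitude of every FFT bin.
    fy = np.fft.fftfreq(size)[:, None]
    fx = np.fft.fftfreq(size)[None, :]
    radius = np.sqrt(fx**2 + fy**2)
    radius[0, 0] = 1.0  # avoid dividing by zero at the DC bin
    # Scale amplitudes by 1/f**alpha, then return to the image domain.
    shaped = np.fft.ifft2(spectrum / radius**alpha).real
    # Normalize to [0, 1] (an assumption) so it can act as an image target.
    shaped -= shaped.min()
    return shaped / shaped.max()


def unstructured_noise_image(size=64, seed=0):
    """Hypothetical helper: i.i.d. uniform noise in [0, 1)."""
    rng = np.random.default_rng(seed)
    return rng.uniform(0.0, 1.0, (size, size))


structured = structured_noise_image()
unstructured = unstructured_noise_image()
```

Either array could then stand in for a natural image as the fitting target when pretraining an INR; how the paper actually samples and uses its noise is not specified here.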

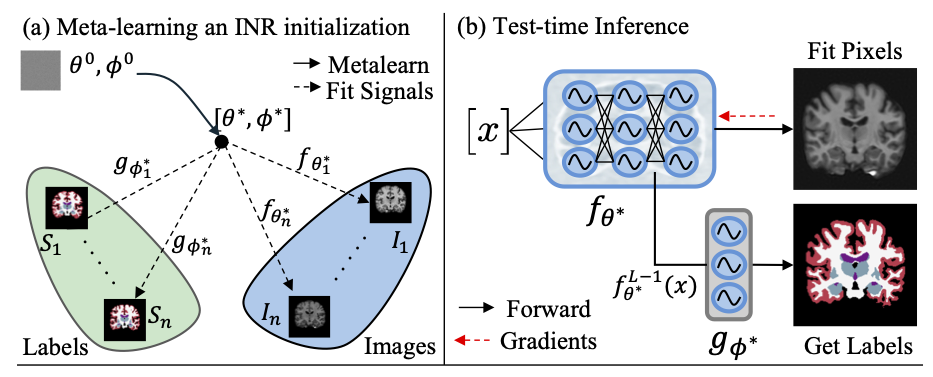

Fit Pixels, Get Labels: Meta-learned Implicit Networks for Image Segmentation (MetaSeg)

Kushal Vyas Ashok Veeraraghavan Guha Balakrishnan

MICCAI (🏆 Best Paper Award, ORAL Presentation), 2025

We showcase MetaSeg, a scalable meta-learned implicit neural representation (INR) that implicitly captures high-resolution per-pixel segmentation by learning a joint cross-task initialization for the INR. At test time, the INR reconstructs the input signal while automatically decoding the underlying semantic segmentation. MetaSeg matches the performance of popular data-driven U-Net models for 2D and 3D brain MRI segmentation with 98% fewer parameters.

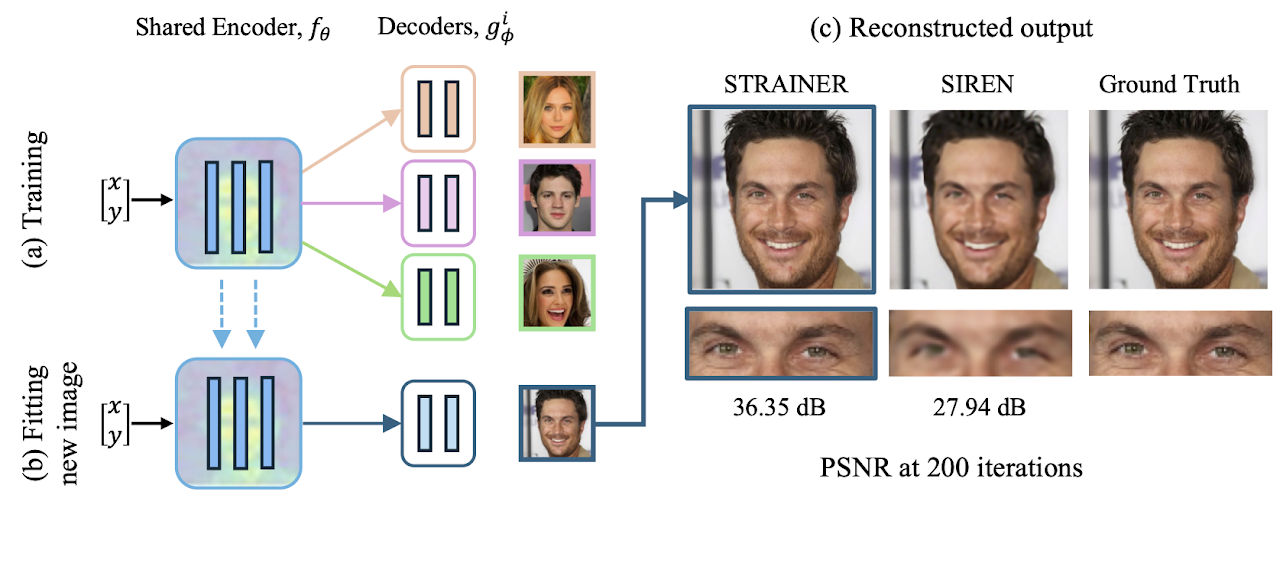

Learning Transferable Features for Implicit Neural Representations

Kushal Vyas Ahmed Imtiaz Humayun Aniket Dashpute Richard G Baraniuk Ashok Veeraraghavan Guha Balakrishnan

NeurIPS, 2024

We showcase STRAINER, a framework for learning transferable and generalizable features for implicit neural representations (INRs). It captures the underlying low-frequency structural prior from limited data, which proves extremely powerful for signal fitting and inverse problems. Using only 10 images as the training dataset, we achieve superior reconstruction quality compared to recent meta-learning and transformer networks.

🏛️ Patents

Granted and pending US patents from applied research at Samsung and Rice University. (Publicly available documents only)